Top Alternatives to Expensive Web Scraping Tools (2026)

By Ahmad Software • May 7, 2026

What do lower-cost scraping tools actually give you?

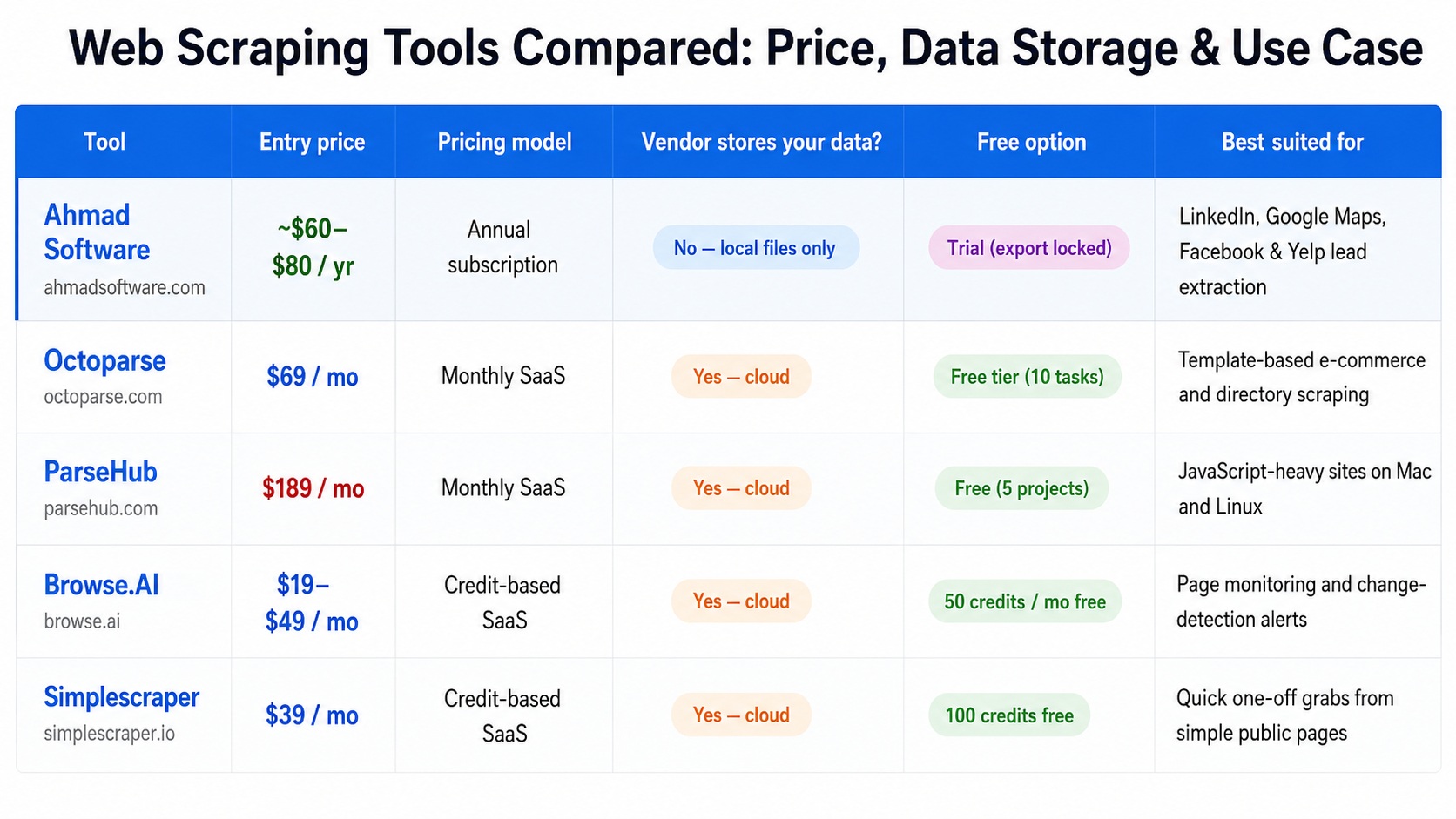

Enterprise scraping platforms routinely cost $500–$2,000 per month. This review looks at five lower-cost tools (Ahmad Software, Octoparse, ParseHub, Browse.AI, and Simplescraper), covering what each one extracts, how it stores data, what it costs annually, and which workflows it fits. There are no rankings and no affiliate links, just a factual comparison for buyers deciding between options.

Web Scraping Tools Compared: Price, Data Storage & Use Case

How the web scraping market divides by price

The market splits into two categories. The first is cloud infrastructure platforms. These run scrapers on remote servers, manage proxy rotation, handle CAPTCHAs, and store the output in the vendor's cloud. They charge monthly because the servers run continuously on behalf of users and so cost money continuously. Bright Data starts at $499/month for most plan types. Apify's free tier offers $5 in monthly credits: enough to test, not enough to run a real workflow.

The second category is desktop-first or extension-first tools aimed at non-technical users. These have lower infrastructure overhead — in some cases, none — and pass that on in the price. The tradeoffs are real: less cloud flexibility, narrower platform coverage, no API for programmatic integration. But for users whose requirements fit within those bounds, the price-to-output ratio is meaningfully different.

Ahmad Software Technologies — desktop lead extraction tools

Ahmad Software Technologies publishes a suite of Windows desktop applications. Most tools are purpose-built for one source: LinkedIn, Google Maps, Facebook, Yelp, Yellow Pages, and others. Everything runs locally, and no data passes through vendor servers.

Worth noting:

Each tool is sold separately, but the absence of monthly infrastructure costs makes long-term use predictable.

What the tools extract, by platform

- Google Map Extractor — business name, phone, address, website, email, ratings, reviews, and hours from Google Maps listings.

- LinkedIn Lead Extractor — names, email addresses, phone numbers, job titles, company details, skills, and profile links from publicly available LinkedIn data.

- LinkedIn Sales Navigator Extractor — the same fields, filtered through Sales Navigator's advanced search parameters.

- LinkedIn Recruiter Extractor — profile data accessible through LinkedIn Recruiter.

- Facebook Lead Extractor — names, phone numbers, emails, addresses, websites, follower counts, and likes from Facebook Pages, Places, and Ads.

- Cute Web Email Extractor — email addresses from websites, search engines, and local files (PDF, Word, Excel).

- Cute Web Phone Number Extractor — mobile and landline numbers from the same sources.

- Yelp Extractor — business name, contact details, address, website, and reviews from Yelp listings.

- Yellow Pages Extractor — business contact data from Yellow Pages directories.

- United Lead Scraper — an all-in-one tool covering multiple business directory sites in a single interface.

- Anysite Scraper — a configurable scraper for any website, using a point-and-click interface that generates extraction rules without requiring code.

Octoparse — visual workflow scraper with cloud execution

Octoparse is a no-code scraping platform that offers both a desktop application and cloud-based job execution. It has approximately 4.5 million users and a library of over 600 pre-built scraping templates for common targets including Amazon, LinkedIn, Instagram, Tripadvisor, and Google Maps.

The free tier is more generous than most competitors at this price level — it imposes no hard page-per-run limit on basic tasks, while most other tools cap free usage at 200–500 pages per month.

Paid plans start at $69/month for Standard, rising to $249/month for Professional.

Octoparse's visual workflow builder runs inside its desktop application on Windows. Users configure scraping tasks by navigating to a target page inside the app, clicking elements they want to extract, and letting the auto-detection system build the extraction rules. Configured tasks can be sent to Octoparse's cloud infrastructure to run on a schedule without the local machine staying on.

Worth noting:

Scraped data is stored in Octoparse's cloud environment. Independent reviewers report that stated plan prices often undercount total costs once IP rotation and CAPTCHA-solving add-ons are included. At $69/month, the Standard plan costs $828 per year before add-ons.

ParseHub — cross-platform scraper for JavaScript-heavy sites

ParseHub is a desktop application available for Windows, Mac, and Linux. Its core technical differentiation is its rendering engine, which handles JavaScript, AJAX, and dynamically loaded content more reliably than most no-code scraping tools. For websites built on React, Vue, Angular, or other frameworks that load content asynchronously, ParseHub handles interactions that simpler CSS-selector-based tools cannot.

The free tier includes five public projects and 200 pages per run. Paid plans start at $189/month for Standard, or approximately $2,268 per year, which makes ParseHub the most expensive tool reviewed here at the Standard tier.

Worth noting:

Reviews consistently identify one common failure mode for new users: after clicking a pagination button, ParseHub requires an explicit step to register it as a page-change action. Missing that step causes the scraper to stop silently, with no error message. This is the most frequently reported source of broken first scrapers. Data is retained on ParseHub's servers.
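Because a run that stops after the first page raises no error, one way to catch it is to check the exported rows afterwards. The Python sketch below is a generic guard, not part of ParseHub. It assumes each exported row carries a column recording the page it came from; the column name `page_url` and the function names are hypothetical and would need to match however the export is actually set up.

```python
from typing import Iterable, Mapping, Set


def pages_seen(rows: Iterable[Mapping[str, str]], page_field: str = "page_url") -> Set[str]:
    """Collect the distinct source pages that appear in the exported rows."""
    return {row[page_field] for row in rows}


def assert_paginated(
    rows: Iterable[Mapping[str, str]],
    expected_min_pages: int,
    page_field: str = "page_url",  # hypothetical column name
) -> int:
    """Raise if the export covers fewer pages than expected; return the page count."""
    count = len(pages_seen(rows, page_field))
    if count < expected_min_pages:
        raise RuntimeError(
            f"export covers {count} page(s), expected at least {expected_min_pages}; "
            "the scraper may have stopped before following pagination"
        )
    return count


if __name__ == "__main__":
    # Illustrative rows only: every row comes from the same page.
    rows = [
        {"name": "A", "page_url": "https://example.com/list?page=1"},
        {"name": "B", "page_url": "https://example.com/list?page=1"},
    ]
    try:
        assert_paginated(rows, expected_min_pages=2)
    except RuntimeError as err:
        print(f"check failed: {err}")
```

The check turns a silent stop into an explicit failure, which is useful before exported data is handed to anyone downstream.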

Browse.AI — monitoring and change detection

Browse.AI takes a different approach from the other tools in this comparison. Rather than general-purpose data extraction, it is designed around the specific workflow of watching pages for changes and being alerted when something updates.

Users train AI robots by demonstrating what to click and collect on a target page. The training process takes five to fifteen minutes for a well-structured page. Once trained, a robot can be set to run on a schedule — hourly, daily, or custom — and notify the user when extracted values change. Pricing is credit-based. The Starter plan offers 50 credits per month at approximately $19/month on annual billing. Data is stored in Browse.AI's cloud and can be piped to Google Sheets, Airtable, or 7,000+ other integrations.
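The core idea of change detection, comparing the values from the latest run against the previous run, can be sketched in a few lines of Python. This is a generic illustration and not Browse.AI's implementation or API; the function name `diff_snapshots` and the sample field names are assumptions made for the example.

```python
from typing import Dict, Optional, Tuple

Snapshot = Dict[str, str]
Change = Tuple[Optional[str], Optional[str]]


def diff_snapshots(previous: Snapshot, current: Snapshot) -> Dict[str, Change]:
    """Return {field: (old, new)} for every field whose value differs.

    A field missing from one snapshot is reported with None on that side.
    """
    changes: Dict[str, Change] = {}
    for field in sorted(set(previous) | set(current)):
        old = previous.get(field)
        new = current.get(field)
        if old != new:
            changes[field] = (old, new)
    return changes


if __name__ == "__main__":
    # Illustrative values only, standing in for two scheduled runs.
    yesterday = {"price": "$49.00", "stock": "In stock"}
    today = {"price": "$44.00", "stock": "In stock"}
    for field, (old, new) in diff_snapshots(yesterday, today).items():
        print(f"{field}: {old!r} -> {new!r}")
```

An alert would fire only when the returned dictionary is non-empty, so an unchanged page produces no notification.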

Worth noting:

Browse.AI is purpose-built for monitoring rather than bulk extraction. Users who want to pull thousands of contact records at once will find it less suited to that workflow than lead-gen-focused tools. The credit model means costs scale with monitoring frequency.

Simplescraper — Chrome extension for quick extractions

Simplescraper is a Chrome browser extension with over 90,000 downloads. It requires no installation beyond the extension itself and allows users to click on page elements to define extraction rules within the browser. Captured data can be exported to CSV or synced to Google Sheets and Airtable on paid plans.

The free tier includes 100 cloud credits and unlimited local scraping. The Pro plan at $70/month adds cloud scheduling and real-time spreadsheet sync. An AI-assisted smart-extract endpoint on paid plans generates CSS selectors from an unfamiliar page structure automatically.

Worth noting:

Simplescraper's extraction relies on CSS selectors, which break when target sites update their layouts. The tool gets blocked quickly on sites with aggressive anti-bot protection; Amazon, Yelp, and LinkedIn are cited consistently in user reports. No proxy rotation is included below the $150/month Premium plan.

Which Tool Fits Which Task

The five tools in this comparison do not always compete directly with each other. The decision usually comes down to what data is needed, how often it is needed, and whether the workflow requires cloud execution or can tolerate a desktop-only setup.
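Price is usually part of that decision as well. The Python sketch below annualizes the monthly plan prices quoted in this review so they can be compared on one scale. It assumes twelve months at the listed price and excludes add-ons such as IP rotation or CAPTCHA solving. Ahmad Software is left out because this review states no price for it, and the function names are illustrative.

```python
from typing import Dict, List, Tuple

# Monthly prices in USD as quoted in this review (add-ons not included).
MONTHLY_PRICES_USD: Dict[str, int] = {
    "Octoparse Standard": 69,
    "Octoparse Professional": 249,
    "ParseHub Standard": 189,
    "Browse.AI Starter (annual billing)": 19,
    "Simplescraper Pro": 70,
    "Simplescraper Premium": 150,
}


def annual_cost(monthly_usd: int, months: int = 12) -> int:
    """Annualize a monthly price, assuming the same price every month."""
    return monthly_usd * months


def annual_table(prices: Dict[str, int]) -> List[Tuple[str, int]]:
    """Return (plan, annual cost) pairs sorted from cheapest to most expensive."""
    return sorted(
        ((plan, annual_cost(price)) for plan, price in prices.items()),
        key=lambda item: item[1],
    )


if __name__ == "__main__":
    for plan, yearly in annual_table(MONTHLY_PRICES_USD):
        print(f"{plan:<36} ${yearly:,}/yr")
```

The Octoparse Standard and ParseHub Standard rows reproduce the $828 and $2,268 annual figures cited earlier.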

Task-to-Tool Mapping

- Ahmad Software: Extracting contact lists from LinkedIn, Google Maps, Facebook, or Yelp for sales or recruitment outreach

- Octoparse: Recurring scrapes of e-commerce sites, directories, or structured data sources using existing templates

- ParseHub: Scraping JavaScript-heavy or AJAX-dependent sites on Mac or Linux where other visual tools fail

- Browse.AI: Monitoring specific pages for changes, such as competitor pricing, job board updates, and stock availability alerts

- Simplescraper: Quick, one-off data grabs from simple public web pages without any installation or setup